November 8, 2025

Your phone books a doctor visit before you feel sick. Traffic lights sync so ambulances never stop. Your inbox sorts itself, then drafts replies you would write. That is the promise people tie to AGI.

AGI means a system that can learn and reason across many tasks, like a person. It would switch goals, pick up new skills, and explain its steps. That is far beyond today’s narrow tools.

Will it happen soon? Most expert surveys cluster between the 2040s and 2060. Some builders say the early 2030s. No one has built it yet, but progress is clear.

Why this matters to you: tech teams need to plan hires, compute, and risk. Policymakers need guardrails that protect people and still allow gains. Health leaders want safe tools that aid care, not add bias or harm. Clear terms help all three groups act with less noise.

In this guide, you will get plain facts, not hype. We will cover what counts as AGI, what does not, and why that line matters. We will share signals to watch, from model tests to real use in labs and clinics. You will leave with a short map for choices you face now.

This post draws on expert views and recent updates as of October 2025. We keep the tone clear and the claims tight. Let’s get to the core question, what AGI means and when it might arrive, then build from there.

What Does AGI Really Mean?

AGI means a system that can think across tasks. It learns fast, adapts, and explains its choices. It is not a single trick. It is a stack of skills that work in new settings without retraining. For context on how experts frame this, see Google’s overview, “What is artificial general intelligence (AGI)?”, which covers generalization and the transfer of skills to new domains.

Key Features That Set AGI Apart

AGI stands out when four abilities show up together. Think of a skilled colleague who can switch roles on short notice and still do the job well.

- Perception across contexts:

What it is: The system parses the world in many formats. It links text, images, sound, and data.

Why it matters: Real problems mix signals. A flood report is words, maps, and sensor streams.

Example: In disaster response, AGI reads satellite images, hears radio chatter, and scans posts. It flags a safe route for rescue teams in minutes.

Contrast: Narrow tools excel on one format, then stumble on mixed inputs.

- Learning from little data:

What it is: The model picks up new skills from a few examples or a short demo.

Why it matters: You do not always have a giant dataset.

Example: A rural clinic gives five sample cases for a rare rash. AGI spots the pattern, then builds a simple triage guide.

Signal: Few-shot or one-shot learning means you get value fast, with lower cost.

- Natural language use:

What it is: Clean dialog, grounded answers, and the ability to ask clarifying questions.

Why it matters: Most work runs on talk and text. Clear dialog reduces errors.

Example: In diplomacy, AGI tracks idioms, honorifics, and local taboos. It helps craft a trade memo that reads as fair to both sides. It notes that a phrase that sounds fine in English can insult a partner in another culture.

Guardrail: Source links and step-by-step notes help users trust the output. For a broad primer on how AGI spans tasks and maintains control, see AWS’s guide, “What is AGI?”

- Self-improvement:

What it is: The system tests, critiques, and upgrades its own methods. It closes loops without waiting for a full retrain.

Why it matters: Gains compound. The model fixes its weak spots, then builds on that fix.

Example: In a lab, AGI designs a new assay, runs a virtual test, adjusts the protocol, then proposes a cheaper reagent. It logs changes and expected gains.

Risk and payoff: Faster cycles can raise both value and stakes. For a broader view on self-improving systems and the pace of change, see “Self-Evolving AI and the Intelligence Explosion.”

Here is a quick way to spot these features at work:

- Cross-domain transfer: Skills move from coding to policy drafts, or from biology to supply chains.

- Grace under uncertainty: The system can act with partial data and still explain its choice.

- Value-aligned dialog: It asks what you care about before it optimizes.

- Transparent loops: It shows tests, results, and why a change helped.

Consider a diplomatic summit. Perception lets AGI track tone shifts. Few-shot learning tunes to a new protocol in a day. Natural language fluency keeps talks smooth and face-saving. Self-improvement means it reviews outcomes after each session, then updates playbooks for the next round.

Or think about a city’s health unit. The system joins clinic notes, pharmacy stock, and air readings. It sees a rise in asthma risk, drafts alerts in plain language, and proposes targeted fixes. It then checks what worked last week and refines the plan.

AGI is not magic. It is a blend of these core skills, used together with care. When you see them in one system, across tasks, you are closer to the real thing.

How AGI Stands Out from Today’s AI Tools

Most tools you use today are narrow. They excel at one task with clear input and fixed rules. AGI would not be boxed in like that. It would move across tasks, learn new skills on the fly, and explain choices in plain terms. Think of it as the jump from a pocket tool to a full workshop.

Examples of Narrow AI in Action Today

You use narrow AI every day, even if you do not notice. It helps, but it does not think across tasks or set goals. Here are common uses, plus where they stop short.

- Recommendation engines

Netflix and YouTube suggest what to watch next. Amazon suggests products. They score patterns and rank options. They do not know your mood or long-term goals. They cannot plan a weekend or balance your time.

- Email spam filters and smart replies

Gmail filters junk and drafts replies. It labels based on patterns in text. It cannot read your workplace context or legal risk. It will not defend that reply in a meeting.

- Voice assistants

Siri and Alexa set timers and answer quick facts. They follow scripts and skills. They cannot manage a complex project with unclear roles. They also fail when requests mix tasks and nuance. For a wider tour, see these practical examples of narrow AI.

- Search ranking and ad targeting

Systems match queries to pages and target ads. They predict clicks, not truth. They do not check long-term outcomes or fairness without extra rules. They optimize for engagement, not wisdom.

- Fraud detection

Banks flag odd card use. Models watch for anomalies in time, place, and spend. They can catch fraud fast. They also hit false positives when your travel pattern shifts.

- Self-driving stacks

Cars perceive lanes, signs, and objects with sensors. They plan paths within known rules. They struggle with rare events or chaotic scenes. They do not reason like a human about intent or culture on the road.

- Medical imaging models

Classifiers spot signs in scans, like nodules or bleeds. They assist, not decide. They cannot take a full patient history, set goals for care, and weigh trade-offs.

- Customer support chatbots

Bots answer common questions and route tickets. They break when issues cross teams or policy. They do not own outcomes or negotiate exceptions.

- Content moderation

Filters detect hate speech or threats. They work on clear patterns. They miss context, satire, or coded language. They need human review for edge cases.

- Industrial predictive maintenance

Models forecast part failures from sensor data. They plan fixes on a schedule. They do not redesign the machine or rewrite supply contracts when parts run short.

Why this matters: each tool is a narrow expert. It shines in its lane, then trips outside it. Models fail with new data that does not match training. They do not transfer skills from movies to medicine, or from chat to logistics. They also do not set goals, ask clarifying questions, or track values across roles.

AGI aims for the gaps that hold back these tools.

- Transfer: Move skills across domains without retraining on huge new sets.

- General reasoning: Form plans with incomplete data. Explain steps and limits.

- Value alignment: Ask what you care about, then optimize for that.

- Self-improvement: Test, critique, and update methods in tight loops.

If you want a quick map of current use cases, this roundup of narrow AI examples like recommendation engines and spam filters gives a solid scan. It shows how many tools help with single slices of work.

AGI stands out by stitching those slices together. It would bridge tasks, not just automate them. It would pull from many signals at once, learn fast from small hints, and carry lessons to the next job. That is the step from strong tools to a true partner.

The Road to AGI: A Quick History

The path to AGI runs through bold ideas, long winters, and sharp rebounds. Each era set a clue for what smart machines might need next. Here is how the field moved from hope to setbacks to real gains.

A stylized look at the 1956 Dartmouth meeting where the term “AI” was coined. Image created with AI.

Early Dreams and First Steps

In the 1950s and 1960s, a small group set a big goal. At the 1956 summer project at Dartmouth, scholars coined the term “artificial intelligence.” Their claim was bold: machines could match key parts of human thought. The plan was loose, the energy high. You can read a short account of how the field took shape in Dartmouth’s own summary, “Artificial Intelligence (AI) Coined at Dartmouth.”

What did early work look like? Logic programs solved toy proofs. Simple chat systems like ELIZA mimicked talk with pattern-matching tricks. Chess programs relied on hand-built opening books. Rules and symbols drove the field. The idea was clear. If we could write enough rules, the machine would think.

By the 1970s, cracks showed. Real life did not fit neat rules. Vision, speech, and common sense all fought back. Systems broke once inputs turned messy. Funding cooled, then fell. The field hit its first “AI winter.”

The 1980s brought new hope with expert systems. These tools captured human rules for narrow tasks like diagnosing faults. Firms built big rule bases and sold them as smart help. It worked for a while, then costs rose and errors grew. Rules clashed, upkeep dragged, and gains slowed. Another winter hit. A brief history of this cycle is outlined in this overview of AI winters and their causes.

Why these winters shaped the field:

- Mismatch with the real world: Rules did not scale to noise and surprise.

- Data hunger: Systems needed more rules and examples than teams could hand-code.

- Maintenance pain: Each new rule broke old rules and raised costs.

- Overpromises: Hype outran results, so funding and trust fell.

The lesson was blunt. Smart behavior needs learning, not just rules. It needs methods that bend with data, handle mess, and improve with use. Those lessons set the stage for the next wave.

Breakthroughs in the 2000s and Beyond

In the 2000s, machine learning took the lead. Instead of writing rules, teams trained models on data. The web poured out text, images, and clicks. Cloud storage got cheap. GPUs made training fast. Big datasets met big compute. Results jumped.

Deep learning stacked layers of simple units. These layers learned features on their own. Vision got a boost with ImageNet, speech error rates dropped, and translation improved. Then, in 2016, AlphaGo beat Lee Sedol in Go. That match showed how search, learning, and planning could combine. For a policy view on why that moment mattered, see this recap from ITIF on how deep learning and AlphaGo marked a turn.

What made this phase different:

- Data at scale: Logs, sensors, and web text fed training.

- Compute growth: GPUs and later TPUs sped up learning.

- Better algorithms: Reinforcement learning, transformers, and optimization tricks pushed past old limits.

- Open tools: Shared code and papers spread ideas fast.

From 2018 on, large language models learned to write, code, and pass exams. Vision-language models tied text to images. Multi-agent systems began to plan and simulate. Labs built tools that could generalize across tasks with few examples. Some signs looked like early steps toward AGI.

So, are we close in 2025? Opinions split. Some see a steady march. They point to agent tools, self-play, and reinforcement learning as the next push. A recent debate on this path asks if reinforcement learning could carry us to AGI. Others urge caution. They note gaps in reasoning, memory, and real-world grounding. The New York Times offers a clear take on why AGI may be farther off than leaders claim.

What holds us back now:

- Reasoning under pressure: Models still trip on multi-step logic without aids.

- Long-term memory: Context windows help, but stable memory is hard.

- Grounding in the world: Text is not the world. Acting in it is harder.

- Safety and control: Systems can go off track without clear goals and checks.

What points to progress:

- Tool use: Models call tools, browse, and execute code to get facts right.

- Self-correction: Critic loops cut errors and teach new moves.

- Synthetic data: Simulated tasks can train skills with fewer real samples.

- Multi-agent play: Teams of models plan, debate, and split work.
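The self-correction and tool-use items above can be made concrete with a small sketch. This is a toy illustration, not any lab's method: `toy_propose`, `toy_critique`, `critic_loop`, and the numbers are all hypothetical stand-ins. The "model" starts with a wrong guess, the critic checks the draft against an independent calculation, and the loop retries with that feedback until the draft passes or the round budget runs out.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical toy task: total a short list of numbers.
NUMBERS = [17, 25, 40]


def toy_propose(feedback: Optional[str]) -> int:
    """Stand-in for a model. The first draft is a deliberately wrong guess;
    once it receives feedback it "calls a tool" (Python's built-in sum)."""
    if feedback is None:
        return 72
    return sum(NUMBERS)


def toy_critique(draft: int) -> Optional[str]:
    """Stand-in for a critic. Returns None when the draft passes,
    otherwise a short note describing the problem."""
    expected = sum(NUMBERS)
    if draft == expected:
        return None
    return f"expected total {expected}, got {draft}"


def critic_loop(
    propose: Callable[[Optional[str]], int],
    critique: Callable[[int], Optional[str]],
    max_rounds: int = 3,
) -> Tuple[Optional[int], List[Tuple[int, int, Optional[str]]]]:
    """Draft, check, and retry with feedback until the critic passes
    the draft or the round budget runs out."""
    feedback: Optional[str] = None
    history: List[Tuple[int, int, Optional[str]]] = []
    for round_no in range(1, max_rounds + 1):
        draft = propose(feedback)
        feedback = critique(draft)
        history.append((round_no, draft, feedback))
        if feedback is None:
            return draft, history
    return None, history  # nothing passed: hand off to a person


answer, history = critic_loop(toy_propose, toy_critique)
print(answer)        # 82
print(len(history))  # 2
```

The history list doubles as a record of what was tried and why it was rejected, which is the "show your work" behavior the article keeps returning to.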

If the 1950s gave us hope, and the 1980s gave us a warning, the 2010s and 2020s gave us a way to scale. Today’s systems learn from data, adapt, and combine skills. They are not general minds yet. Still, the curve is rising, and the tools are sharper than ever.

Where We Stand Now: Progress Toward AGI in 2025

AGI talk feels louder in 2025. The progress is real, but uneven. Labs chase systems that plan, remember, and act. Tool use, agent teams, and self-checking loops lead the way. The big question is not only who wins, but which ideas scale with care.

Here is where the major labs point the field, and how their bets map to AGI goals.

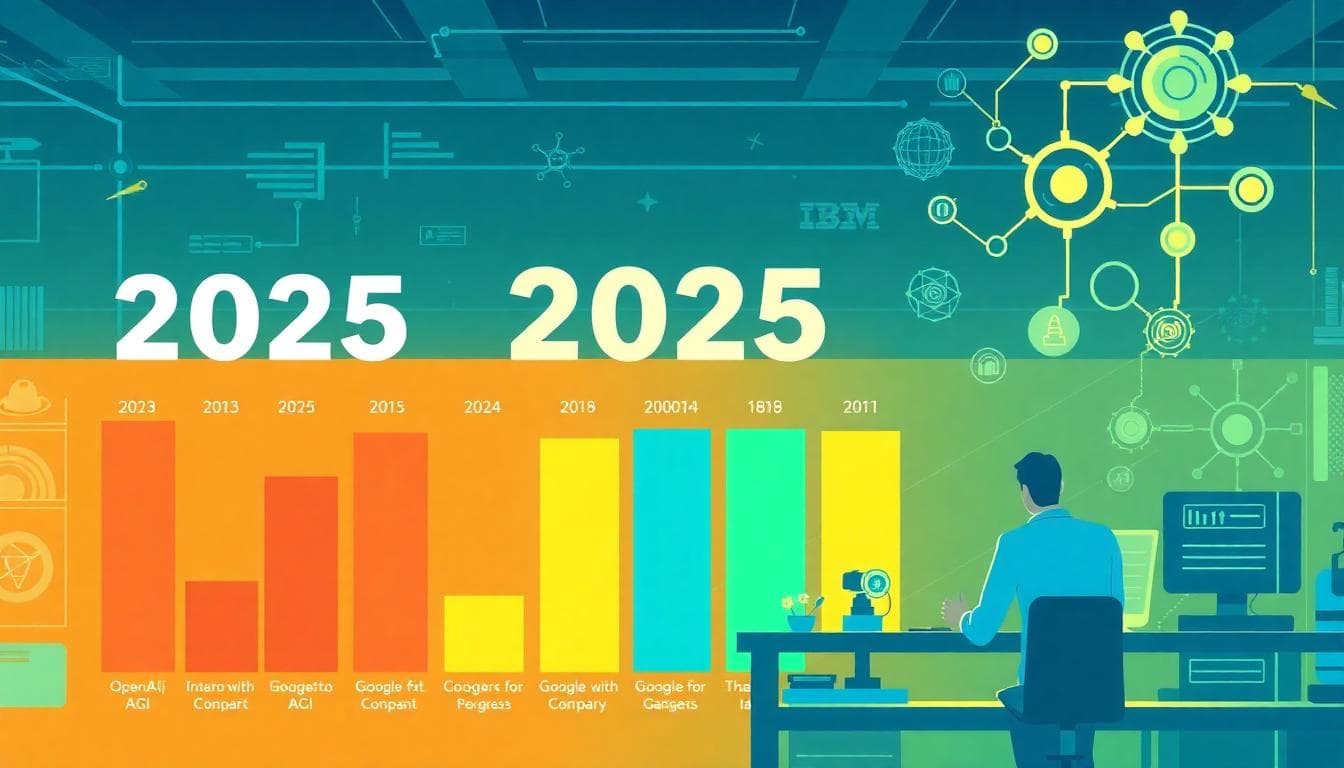

Big Players and Their Latest Work

The largest labs share a few aims. They want models that can reason, call tools, and test their own steps. They also try to reduce errors with safer training and tighter oversight. Here is how OpenAI, Google, and IBM push the line in 2025.

- OpenAI: agents, tools, and science

OpenAI leans hard on agent skills. That means models that can plan tasks, call APIs, write code, and check results. The goal is clear. Turn chat into real work across apps and data. The lab also ties model use to science, like code tools for research and complex math. You can see the wider debate on how to judge progress in this piece on how we might recognize AGI and measure it.

How this helps AGI goals:

- Generalization: A single model shifts from email to code to data queries.

- Tool use: Browsing, code exec, and simulators cut errors and fill gaps.

- Self-correction: Critic loops reduce hallucinated steps and tighten plans.

What to watch: stable memory across tasks, live data grounding, and safe auto-actions.

- Google (DeepMind): multimodal reasoning and long context

Google pairs large models with long context windows and strong search links. Their work joins text, vision, and audio. The push is to hold long plans in mind and use fresh facts. DeepMind’s Alpha-series work, such as AlphaFold for protein structure prediction and its theorem-proving tools, shows skill in domains with rules and feedback. That mix, planning plus external tools, is key for AGI.

How this helps AGI goals:

- Reasoning: Better chain-of-thought with tool calls during hard steps.

- Grounding: Search and code tools test claims before acting.

- Transfer: Wins in math and science move to policy and ops tasks.

What to watch: fewer shortcuts in reasoning, better real-time updates, and stronger safety checks when models act.

- IBM: enterprise agents and hybrid methods

IBM drives agent tech for real workflows. Think of systems that read docs, call apps, and track steps with audit logs. Their writing stresses what agents can do now and what they cannot. For a sober view, see IBM’s take on AI agents in 2025, expectations vs. reality. IBM also backs hybrid methods that blend neural nets with rules where it helps. That can steady models in high-stakes settings.

How this helps AGI goals:

- Reliability: Guardrails and logs make agent plans traceable.

- Compliance: Clear steps ease audits in banks, health, and public work.

- Composability: Many small tools form one larger, goal-driven agent.

What to watch: smoother handoffs between learned parts and rule-based parts, plus richer memory over long jobs.

What ties these efforts together:

- Agents, not just chat: Models plan, act, and reflect. This cuts busywork and tests reasoning under pressure.

- Tool use as a backbone: Browsers, code, search, and simulators plug holes in model knowledge.

- Safety as design: Sandboxes, rate limits, role prompts, and human checks come first.

Where are the gaps? Long-term memory is brittle. Models still guess when stuck. Real-world action needs identity, permissions, and strong logs. Labs know this. They are now shipping features that slow models down at risky steps, and speed them up when they can prove facts.
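The idea of slowing an agent down at risky steps, with permissions and a log, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of any vendor's product: the class `AuditedAgent`, the action names in `RISKY_ACTIONS`, and the spending limit in the example reviewer are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical labels for actions that need a review before they run.
RISKY_ACTIONS = {"send_payment", "delete_records"}


@dataclass
class AuditedAgent:
    """Wraps agent actions with an approval gate and an audit log."""

    # Callback that stands in for a human reviewer or a policy check.
    approve: Callable[[str, Dict], bool]
    log: List[Dict] = field(default_factory=list)

    def act(self, action: str, params: Dict) -> str:
        needs_review = action in RISKY_ACTIONS
        # Low-risk actions pass straight through; risky ones wait for approval.
        approved = self.approve(action, params) if needs_review else True
        status = "executed" if approved else "blocked"
        # Every attempt is logged, including the blocked ones.
        self.log.append(
            {
                "action": action,
                "params": params,
                "needs_review": needs_review,
                "status": status,
            }
        )
        return status


# Example reviewer (assumption): allow risky actions only up to 100 units.
agent = AuditedAgent(approve=lambda action, params: params.get("amount", 0) <= 100)

print(agent.act("draft_email", {"to": "team"}))     # executed
print(agent.act("send_payment", {"amount": 5000}))  # blocked
print(agent.act("send_payment", {"amount": 50}))    # executed
print(len(agent.log))                               # 3
```

Logging blocked attempts as well as executed ones is the design choice that matters here: an audit trail that only records successes cannot show where the guardrail actually did its job.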

One more signal in 2025 is talent flow. Top teams move across labs and startups to speed AI for science and industry. That churn hints at a race to apply agent skills to hard research. The New York Times covers this shift in top AI researchers leaving big labs to build new teams.

Key takeaways for readers who plan:

- Expect agent-first tools that chain tasks and show their work.

- Plan for governance baked into the stack, not bolted on later.

- Invest in evaluation: not only benchmarks, but real process checks.

- Watch memory and autonomy, since both raise impact and risk.

We are not at AGI. But the pieces are snapping into place. When models plan, call tools, and correct themselves, they start to act more like partners than parrots. The winners will be those who pair that power with care, audit, and proof.

When Will AGI Arrive? Expert Predictions and Timelines

Forecasts vary. Some leaders call for a near-term leap. Many researchers expect a longer wait. The spread reflects different bets on scale, compute, and fresh ideas. Here is a clear scan of what optimists and skeptics see, and what could tip the balance.

A visual timeline of AGI predictions from near-term to mid-century. Image created with AI.

Optimistic Views: Why 2025 Might Be the Year

The bullish case says the pieces are already here, just not assembled at full scale. You hear this in comments from builders and investors who watch compute curves and model behavior every day. Sam Altman hints at a step-change as agents take on real work, not just chat. See his views in this overview on how Sam Altman is thinking about AGI and near-term superintelligence.

Why some say 2025 could surprise:

- Scale keeps working: Bigger models gain skills with more data and compute. Scaling laws still point to steady gains. Tool use and code execution patch gaps in pure text training.

- Agents mature: Models plan tasks, call APIs, and check their work. That looks closer to general problem solving than prompt-and-reply.

- Synthetic data supply: High-quality synthetic tasks can boost rare skills. This cuts data bottlenecks and speeds learning.

- Long context and memory aids: Expanding context windows let models hold plans longer. External memory stores help keep state across steps.

- Tighter evals: New tests for generalization, tool use, and safety sharpen progress checks. See how to judge AGI in IEEE Spectrum’s framing of recognition and benchmarks.

What could still break the 2025 case:

- Reliability: Small error rates compound across long plans. One bad step can ruin a chain.

- Safety and control: Autonomy raises risk if goals or constraints drift. Guardrails must scale with power.

- Hardware and energy: Training and serving costs can spike. Supply chain issues slow rollouts.

- Overhang myths: Assuming a hidden jump from a secret model can mislead teams and the public.
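The reliability point is easy to check with arithmetic. As a rough sketch, assume every step in a plan fails independently with the same probability (a simplification; real agent errors are often correlated). Then a chain of n steps succeeds with probability (1 - p)^n. The function name `chain_success` and the 1 percent error rate are illustrative choices, not measured figures.

```python
def chain_success(step_error: float, steps: int) -> float:
    """Probability that every step in a chain succeeds, assuming each step
    fails independently with probability `step_error`."""
    if not 0.0 <= step_error <= 1.0:
        raise ValueError("step_error must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return (1.0 - step_error) ** steps


# Illustrative 1% per-step error rate (an assumption, not a benchmark result).
for steps in (10, 50, 100):
    print(steps, round(chain_success(0.01, steps), 3))
# 10 0.904
# 50 0.605
# 100 0.366
```

Under these assumptions, a step that is right 99 times in 100 still finishes a 100-step plan only about a third of the time, which is why checkpoints and self-checks matter more as plans get longer.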

A few writers even suggest a singularity within the next year or two. Treat that as a stress test for your plans, not a lock. For a sample of that view, see Popular Mechanics on claims for a near-2026 horizon in singularity predictions as early as 2026.

Bottom line for optimists: watch for agent work on live systems, not demos. Look for stable memory, tool chains with audits, and fewer silent failures.

Cautious Takes: Longer Roads Ahead

Most surveys still point later. Many researchers place a 50 percent chance between 2040 and 2050. Aggregations of thousands of public forecasts support this middle view. See a data-rich rollup of expert timelines in 8590 AGI predictions summarized.

Why the timeline stretches:

- Reasoning depth: Models mimic steps, then slip on hard logic. They need causal models, not surface patterns alone.

- Robust memory: Long jobs need true working memory, not just longer context. State must persist and stay clean.

- Embodiment or richer grounding: Text is a thin slice of the world. Acting in real or simulated space teaches limits and physics.

- Sample efficiency: Humans learn from few examples. Models still need many. Smarter learning would cut this gap.

- Interpretability: We do not know why models pick some actions. Clearer internals help safety and speed trust.

The cautious side also warns against cherry-picked demos. Passing one tough test does not mean broad skill. General intelligence should transfer across domains without heavy retuning. That is rare today. Some track forecasts day by day to avoid bias. For that perspective, see the rolling snapshots on The AGI Clock.

Practical advice from the slow camp:

- Expect stepwise gains, not a single flip. Plan for steady integration into work, with controls.

- Invest in evals and audits that track generalization, not just benchmarks. Focus on failure modes.

- Push for new ideas in memory, planning, and causal learning. Scale alone might stall without them.

Both sides agree on one thing. Real progress shows up in systems that act, not just talk. Keep your eyes on agent performance, safety practices, and cost curves. Those signals will tell you whether we see a near-term leap or a measured climb.

Conclusion

AGI means broad skill, fast learning, clear reasons, and steady self-checks. It is not today’s narrow tools. The path ran from early rules, through winters, to data and scale. Recent gains come from agents, tool use, and longer context. Timelines split: near term for some, mid-century for many.

Plan with that split in mind. Build pilots that show work, track steps, and log risk. Pair models with audits, memory rules, and clear permissions. Watch cost curves, safety practices, and agent results on live tasks. For policy and trade, see the global impact of AI diplomacy on economies.

Stay close to reliable sources and public evals. Join local forums, standards groups, or civic boards. Share real outcomes, not demos. Ask your teams to report failures and fixes each week. Treat safety like uptime, measured and owned.

As of October 2025, the signal is progress with gaps. The next moves are simple and hard at once. Test, measure, and ship with care. Keep people in the loop for high-stakes steps. If we do that, AGI will serve broad goals, not narrow wins.